Welcome!

My name is Atell Krasnopolski, and I'm an applied mathematics student, although, as one can see from my projects (listed below), I'm heavily into computer science and related things.

- E-mail: delta_atell@protonmail.com

- GitHub: gojakuch

- GitLab: delta_atell

My Projects

[June-September 2023] Julia for Analysis Grand Challenge [repo]

This was my project during my second IRIS-HEP Summer Fellowship program. My mentors for the project were Jerry Ling (Harvard) and Alex Held (UWM).

I later presented the results of this project at the Julia for High Energy Physics Workshop at the Erlangen Centre for Astroparticle Physics in November 2023: "Julia for AGC".

[January 2023 - Present] Phyxel Engine [repo]

Phyxel is an open-source 2D physics engine that allows you to run cellular physics simulations (what we call phyxel physics) easily. It is portable and written entirely in C++. You can create games that are similar to the famous detailed world-simulating computer games, as well as cellular automata physics simulations. The set of features includes:

- Basic behaviour of gases, powders, liquids, and solid materials. There are no pre-defined materials, so you can have any set of materials you would like. You define the materials yourself and can provide different masses and properties.

- Temperature system with possible state transitions.

- Adjustable chemistry system that allows detailed interactions between any materials of your choice.

- Custom discrete force field whose direction is defined locally for each phyxel.

- Some imitation of electricity.

- Rigid body system that is compatible with every system above.

- And many more...

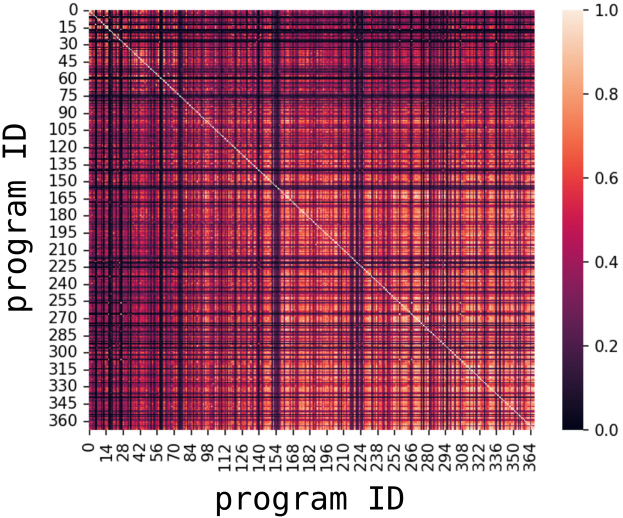

This project was made for the Introduction to Data Science course at the University of Tartu during my exchange, together with my peer Oliver Vainikko (University of Tartu). The approach was based on abstract syntax trees and token vectorisation.

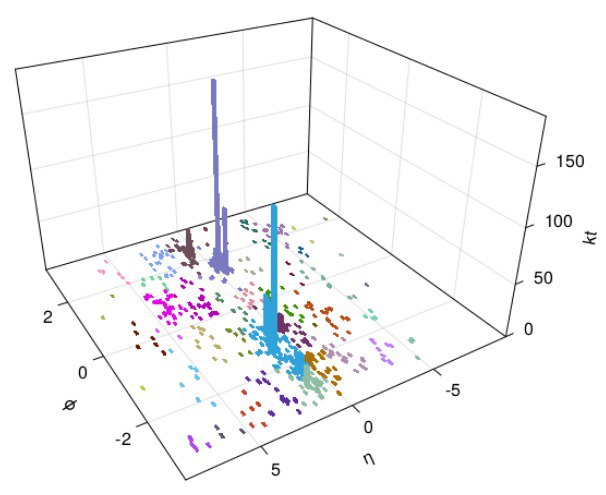

[June-September 2022] Jet Reconstruction with Julia [paper/repo]

visualised results

This was my project during the IRIS-HEP Summer Fellowship program. My mentors for the project were Benedikt Hegner (CERN) and Graeme A Stewart (CERN), and I worked on jet reconstruction with Julia. The idea was to implement some jet reconstruction algorithms (specifically the anti-kt algorithm) in a native Julian way and see how fast we could get with it.

I learned a lot about high energy physics and algorithm optimisation, and met new people at CERN. I also co-authored a paper based on the results of this project: "Polyglot Jet Finding".
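For readers unfamiliar with anti-kt, here is a minimal Python sketch of the naive version of the algorithm. It is not the optimised Julia code from the project. It uses the standard anti-kt distances d_ij = min(1/pt_i², 1/pt_j²) · ΔR_ij² / R² and d_iB = 1/pt_i², and assumes particles are given as (px, py, pz, E) tuples that are merged by adding four-momenta. The function names and the default radius are illustrative choices.

```python
import math

def _pt2(p):
    # Squared transverse momentum of a (px, py, pz, E) tuple.
    return p[0] * p[0] + p[1] * p[1]

def _rapidity(p):
    # Assumes E > |pz|, which holds for any particle with nonzero pt.
    return 0.5 * math.log((p[3] + p[2]) / (p[3] - p[2]))

def _delta_r2(a, b):
    # Squared distance in the (rapidity, azimuth) plane.
    dy = _rapidity(a) - _rapidity(b)
    dphi = abs(math.atan2(a[1], a[0]) - math.atan2(b[1], b[0]))
    if dphi > math.pi:
        dphi = 2.0 * math.pi - dphi
    return dy * dy + dphi * dphi

def anti_kt(particles, radius=0.4):
    """Naive O(N^3) anti-kt clustering; returns the final jets."""
    pseudojets = [tuple(p) for p in particles]
    jets = []
    while pseudojets:
        best = None  # (distance, i, j); j is None for a beam distance
        for i, a in enumerate(pseudojets):
            inv_pt2_a = 1.0 / _pt2(a)
            if best is None or inv_pt2_a < best[0]:
                best = (inv_pt2_a, i, None)
            for j in range(i + 1, len(pseudojets)):
                b = pseudojets[j]
                d_ij = (min(inv_pt2_a, 1.0 / _pt2(b))
                        * _delta_r2(a, b) / radius ** 2)
                if d_ij < best[0]:
                    best = (d_ij, i, j)
        _, i, j = best
        if j is None:
            # The beam distance is smallest: promote pseudojet i to a jet.
            jets.append(pseudojets.pop(i))
        else:
            # Merge i and j by adding four-momenta (j > i, so pop j first).
            b = pseudojets.pop(j)
            a = pseudojets.pop(i)
            pseudojets.append(tuple(x + y for x, y in zip(a, b)))
    return jets
```

Calling `anti_kt` on a list of four-momenta returns the clustered jets in the order they were finalised. Rescanning every pair on each iteration is exactly the cost that a fast implementation has to avoid.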

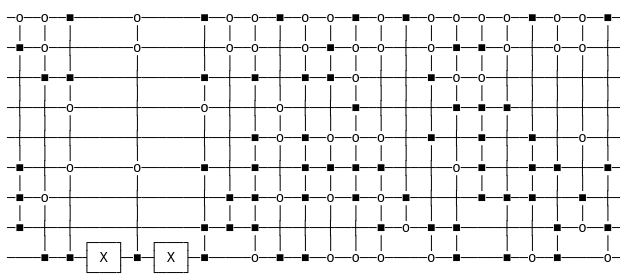

[May 2022] Solving k-SAT Problems with a Combination of Grover's and Schöning's Algorithms [notebook]

In this notebook, I implemented three (actually, even more) approaches to k-SAT problems: the classical Schöning's algorithm, the quantum Grover's algorithm, and a combination of the two. Of course, the main purpose, to my mind, was to shed some light on the combined approach, as the first two are generally mainstream.

Even though this algorithm (combined Schöning's and Grover's approaches) is quite frequently mentioned, I haven't found its implementation, detailed description, or pseudocode anywhere, so I needed to derive it almost entirely. Needless to say, I learned a lot trying to implement it.

Fun fact: someone actually told me they'd used these results in their master's/bachelor's thesis.
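As a point of reference for the classical part only, here is a minimal Python sketch of Schöning's random-walk algorithm. It is not the notebook's code and does not cover the Grover or combined variants. The DIMACS-style clause encoding (the integer 3 means x3, -3 means NOT x3), the function names, and the `max_tries` parameter are assumptions made for illustration.

```python
import random

def is_satisfied(clause, assignment):
    # A clause holds if at least one of its literals is true.
    return any(assignment[abs(lit) - 1] == (lit > 0) for lit in clause)

def schoening(clauses, n_vars, max_tries=1000, rng=None):
    """Return a satisfying assignment as a list of bools, or None."""
    rng = rng or random.Random()
    for _ in range(max_tries):
        # Restart from a uniformly random assignment.
        assignment = [rng.random() < 0.5 for _ in range(n_vars)]
        # Check the assignment, then flip; up to 3n flips get checked.
        for _ in range(3 * n_vars + 1):
            unsatisfied = [c for c in clauses
                           if not is_satisfied(c, assignment)]
            if not unsatisfied:
                return assignment
            # Flip a random variable of a random unsatisfied clause.
            lit = rng.choice(rng.choice(unsatisfied))
            assignment[abs(lit) - 1] = not assignment[abs(lit) - 1]
    return None
```

A `None` result only means that no solution was found within `max_tries` restarts. The algorithm is probabilistic and cannot prove unsatisfiability.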

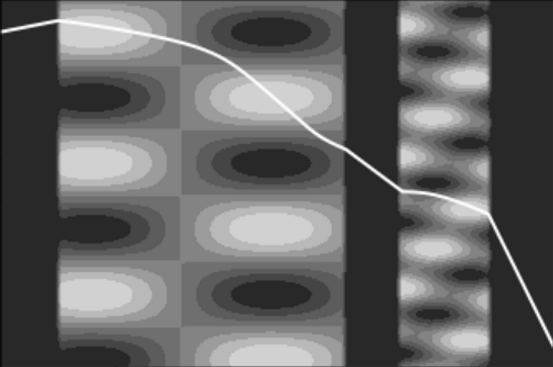

[April 2022] Nonconstant Optical Density Simulation [notebook]

a ray travels through a nonconstant environment

My friend Petro Zarytskyi and I made a physical simulation based on Snell's law. The idea was to simulate how light travels through materials with nonconstant optical density, or through complex environments in general. To apply the classical Snell's law, we treat each small step of the ray as a passage between two separate media with different optical densities, and from that derive a formula for the iterative update of a light particle. More details on the derivation and the implementation of the simulation are here.
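The general idea of such an iterative update can be sketched in Python for the simplest case. This is not the formula derived in the notebook. It assumes a stratified medium whose refractive index depends only on y, so every interface is horizontal and the angle theta is measured from the y-axis. The function name, the step size, and the handling of total internal reflection are illustrative assumptions.

```python
import math

def trace_ray(n_of_y, x0, y0, theta0, step=0.01, n_steps=1000):
    """Trace a ray through a medium with refractive index n_of_y(y).

    theta is the angle between the ray and the y-axis, in [0, pi/2).
    Returns a list of (x, y, theta) samples along the path.
    """
    x, y, theta = x0, y0, theta0
    direction = 1.0  # +1 while moving up in y, -1 while moving down
    path = [(x, y, theta)]
    for _ in range(n_steps):
        y_next = y + direction * step * math.cos(theta)
        # Snell's law between the current layer and the next one:
        # n(y) * sin(theta) = n(y_next) * sin(theta_next)
        s = n_of_y(y) * math.sin(theta) / n_of_y(y_next)
        if abs(s) > 1.0:
            # Total internal reflection: turn around, retry next step.
            direction = -direction
            continue
        x += step * math.sin(theta)
        y = y_next
        theta = math.asin(s)
        path.append((x, y, theta))
    return path
```

For example, `trace_ray(lambda y: 1.0 + 0.5 * y, 0.0, 0.0, 0.5)` traces a ray that bends towards the normal as the medium gets denser. A fully two-dimensional index field would need the local gradient of n as the interface normal, which this sketch leaves out.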

[August 2021] Solving 1-dimensional Variational Calculus Problems Numerically with Gradient Descent [text/repo]

I had two weeks left before my freshman year at the university, so I decided to spend them working on something relatively small. At that time I had found out about variational calculus and had an idea for a method for solving 1D problems numerically using simple techniques that I have intuition for (such as gradient descent, polynomial function approximation, etc.). Not the best method one could use, but I am sort of proud of it, as it sums up many things from my pre-undergraduate self-study.

UPD: I realised a couple of months later that, essentially, this project is a simple Physics-Informed Neural Network that could be designed way better.
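To illustrate the general idea of attacking a variational problem with gradient descent, here is a minimal Python sketch. It is not the method from the project, which relies on polynomial approximation. It assumes the simplest possible setup: the functional J[y] = ∫₀¹ y'(x)² dx with y(0) = 0 and y(1) = 1, discretised on a uniform grid, whose exact minimiser is the straight line y = x. The function name and all parameters are illustrative.

```python
def minimise_dirichlet_energy(n=20, steps=5000):
    """Gradient descent on the discretised J[y] = sum((y[i+1]-y[i])^2)/h."""
    h = 1.0 / n
    xs = [i * h for i in range(n + 1)]
    # Initial guess y = x^2 already satisfies both boundary conditions.
    ys = [x * x for x in xs]
    # The step size must stay below h / 4 for the iteration to be stable.
    lr = h / 5.0
    for _ in range(steps):
        # dJ/dy[i] = 2 * (2*y[i] - y[i-1] - y[i+1]) / h for interior points.
        grad = [0.0] * (n + 1)
        for i in range(1, n):
            grad[i] = 2.0 * (2.0 * ys[i] - ys[i - 1] - ys[i + 1]) / h
        # The boundary values stay fixed, so only interior points move.
        for i in range(1, n):
            ys[i] -= lr * grad[i]
    return xs, ys
```

The returned `ys` should approach `xs` as the number of steps grows. For other functionals only the gradient line changes, and it can be replaced with a finite-difference estimate of J when no closed form is at hand.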

[June 2021] The Aetherlang Programming Language and Ether-Dimensional Programming Paradigm [website+docs/repo]

I designed a programming paradigm with unique terminology and implemented an entire programming language named Aetherlang to demonstrate it. The paradigm provides concepts such as "dimensions" for easy parallelism and nested routines. There are also no variables, and there are more flow-control features thanks to the safe "GOTO" statement. Aetherlang has been implemented in the Julia programming language but could be made more independent from it.

Actually, in retrospect, I would rather define the programming paradigm another way and would thus make another language to demonstrate it. The idea would be to postpone as many computations as possible and to make every function call in parallel to the main program flow. However, this is another story.

[March+July 2021] Dynamic Rules On Elementary Cellular Automata [paper/repo]

Here, I invented a new type of cellular automata for a talk on Complexity Theory Chat in March 2021. In July I returned to it and wrote a paper with some analysis and further propositions. This website's background has been generated by such an automaton (rule 105>>+), by the way. The idea is simple: you make automata more dynamic by introducing second-order rules. This framework, however, turned out to produce very interesting behaviour. You can, for instance, combine two different ECA rules, make some Turing-compatible automata, etc. Again, more about it in the paper.

[July 2020] Lamarckian Evolution Simulation on Intelligent Species [doc/repo]

A Lamarckian evolution model simulation with intelligent species that have primitive neural networks as their brains. We (my friend, who is a biology student, and I) tried to simulate the process of Lamarckian evolution while making the species complex and "intelligent" by giving them a primitive NN with which they decide where to move based on what they see. More on this can be found in the corresponding documentation.

[May 2020] Training StyleGAN to Generate Images of Me

me (yeah, I've changed, it's an old one :D)

This was probably the first ML-related "project" of mine. I didn't consider it really valuable at first, and it was added to this list later than the other things, because I found out that other people did that too. I trained StyleGAN on a data set of images of my face (and of similar-looking people). At some point, I experienced issues fine-tuning the model further, so it could only produce low-resolution images. I fixed this problem by using a super-resolution network on every image. Even though I didn't have any mathematical intuition for how such things work at that time, it was a good first experience. The goal was to generate a perfect profile picture for me, and it served the purpose. For a long time, it remained my profile picture on Telegram and GitHub, although it became unrepresentative when I changed my haircut.